The IPOX® Watch - Pre-IPO Analysis: Cerebras

COMPANY DESCRIPTION

Founded in 2015 by former SeaMicro executives Andrew Feldman, Gary Lauterbach, Michael James, Sean Lie, and Jean-Philippe Fricker, Cerebras Systems Inc. is a Sunnyvale, California–based AI infrastructure company that builds large-scale systems for training and running AI models. Its flagship CS-3 system is powered by the Wafer-Scale Engine 3 (WSE-3), the largest AI chip ever built: a single silicon wafer carrying 4 trillion transistors that, according to the company, delivers 125 petaflops of AI compute and 28× more processing power than an Nvidia H100. Customers and partners include OpenAI, AWS, Meta, GSK, Mayo Clinic, and major sovereign-AI institutions. Backed by a $1.0B Series H led by Tiger Global at a valuation of roughly $23B, Cerebras is one of the most visible alternatives to Nvidia in the AI infrastructure IPO market.

BUSINESS MODEL

Cerebras combines hardware sales with cloud-based AI services. The company sells complete CS-3 systems to enterprises, research institutions, and sovereign-AI customers; offers pay-per-use AI inference through its own cloud; signs multi-year capacity agreements with strategic customers, including a 750 MW deal with OpenAI; and distributes through partners such as AWS Bedrock and Meta's Llama API. Revenue grew 75.7%, from $290.3M in 2024 to $510.0M in 2025, and contracted backlog (future revenue from signed customer agreements) reached approximately $24.6B. In 2025, gross margin was 39.0%, the operating loss was $145.9M, and free cash flow was negative $392.8M as Cerebras invested heavily to scale capacity.
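As a sanity check on the financial figures, the growth rate and loss ratios can be recomputed from the reported totals. The sketch below is illustrative only: `summarize_financials` is a hypothetical helper, all inputs are in $M, and the implied gross profit and loss-to-revenue ratios are simple derivations assumed here, not metrics Cerebras reports.

```python
def summarize_financials(rev_prev, rev_curr, gross_margin, op_loss, fcf_loss):
    """Illustrative ratios from figures in $M (hypothetical helper, not a reported metric set)."""
    growth = (rev_curr - rev_prev) / rev_prev
    gross_profit = gross_margin * rev_curr   # implied from margin x revenue, not reported
    op_margin = -op_loss / rev_curr          # negative value = loss as a share of revenue
    fcf_margin = -fcf_loss / rev_curr        # negative value = cash burn as a share of revenue
    return {
        "growth_pct": round(growth * 100, 1),
        "implied_gross_profit_m": round(gross_profit, 1),
        "operating_margin_pct": round(op_margin * 100, 1),
        "fcf_margin_pct": round(fcf_margin * 100, 1),
    }


if __name__ == "__main__":
    # 2024 revenue, 2025 revenue, 2025 gross margin, operating loss, free cash flow loss
    stats = summarize_financials(290.3, 510.0, 0.390, 145.9, 392.8)
    for key, value in stats.items():
        print(f"{key}: {value}")
```

Run as a script, it prints growth of 75.7%, an implied gross profit of about $198.9M, and loss ratios of roughly -28.6% (operating) and -77.0% (free cash flow), consistent with a fast-growing company spending ahead of revenue.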

IPO

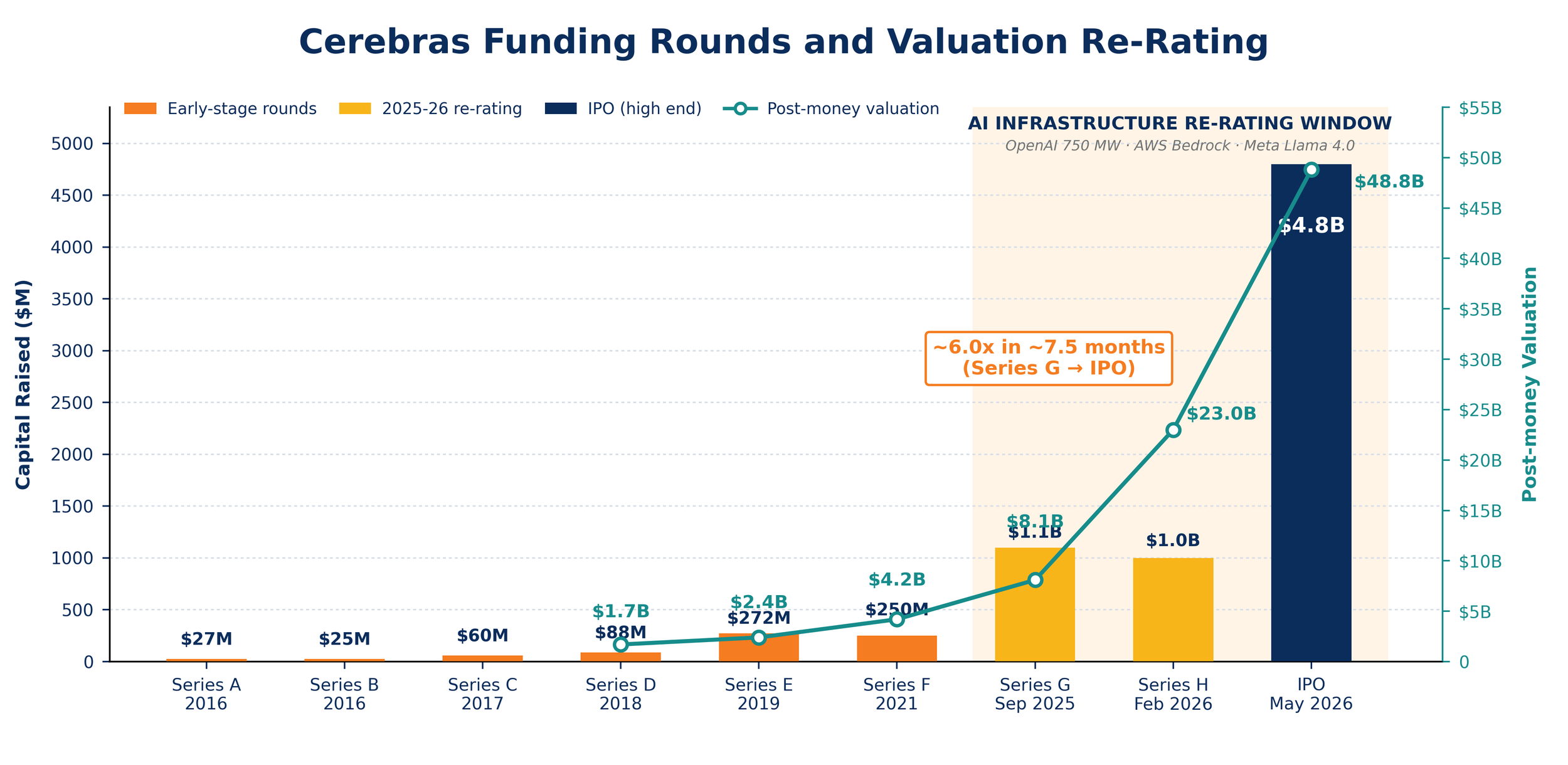

Cerebras has formally relaunched its IPO. An earlier attempt was delayed by a U.S. national-security review tied to G42's investment and Cerebras's Middle East customer relationships, and was then withdrawn in October 2025. On May 4, 2026, Cerebras began marketing 28.0 million Class A shares at an indicated range of $150–$160 per share; at the top of the range, the offering would raise approximately $4.48B at a valuation of roughly $49B. Cerebras has applied to list on Nasdaq under the ticker CBRS, with Morgan Stanley, Citigroup, Barclays, and UBS Investment Bank as lead bookrunners. The timing is deliberate: as IPOX has noted publicly, issuers are racing to complete deals ahead of SpaceX, whose IPO is expected to absorb significant investor attention and capital when it arrives.
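Deal size follows directly from shares offered times offer price. The sketch below assumes gross proceeds only, before underwriting fees and without any over-allotment (greenshoe) option, since neither is specified here; the constant and function names are hypothetical.

```python
SHARES_OFFERED = 28.0e6          # Class A shares being marketed
PRICE_RANGE = (150.0, 160.0)     # indicated range, $ per share


def gross_proceeds(shares: float, price: float) -> float:
    """Gross proceeds in $, before underwriting fees and any over-allotment option (assumption)."""
    return shares * price


if __name__ == "__main__":
    for price in PRICE_RANGE:
        print(f"${price:.0f}/share -> ${gross_proceeds(SHARES_OFFERED, price) / 1e9:.2f}B")
```

On those assumptions, the base offering implies about $4.20B at the bottom of the range and $4.48B at the top.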

RISKS

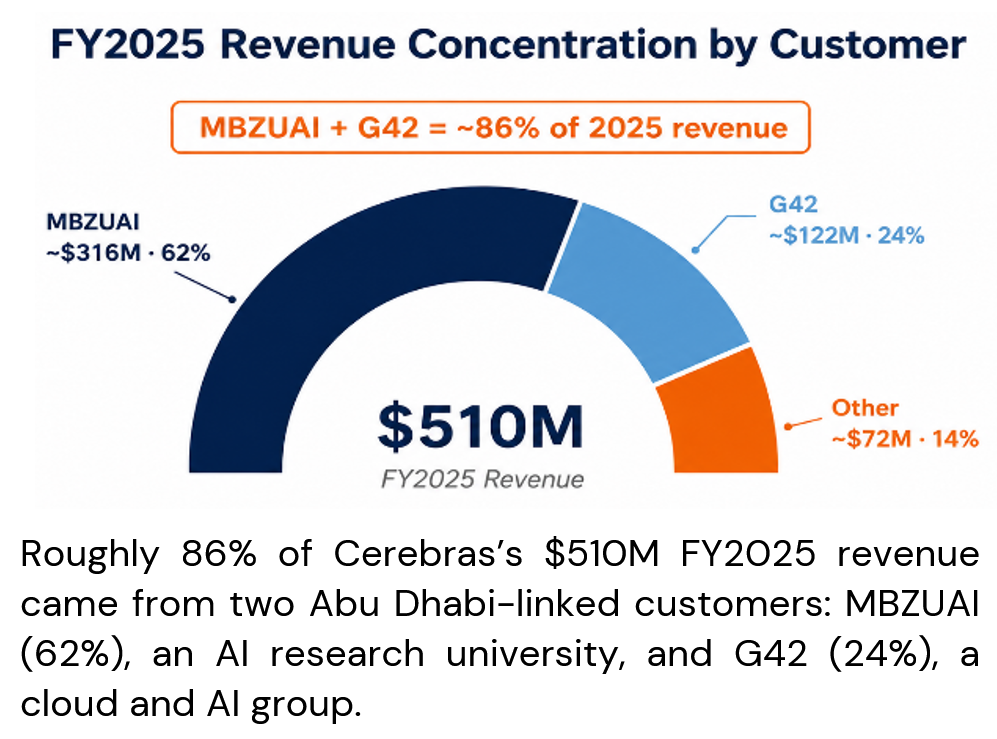

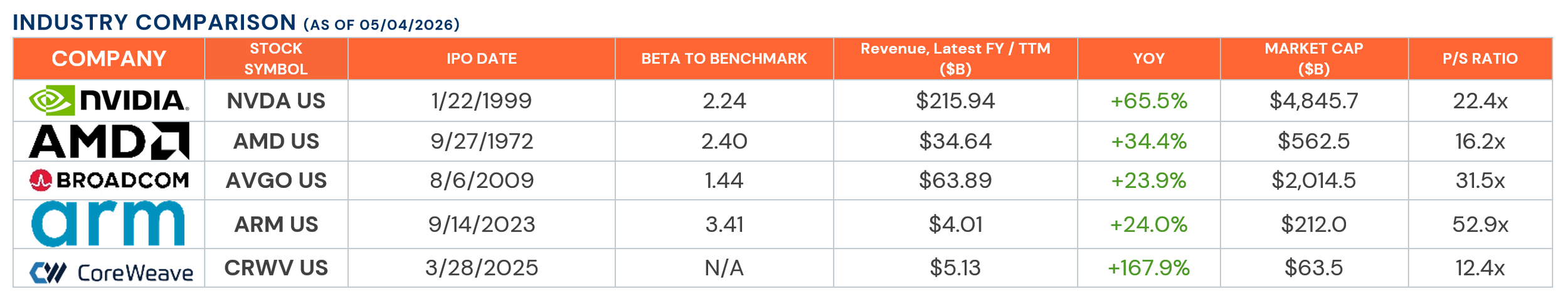

Cerebras's central risk is that the IPO valuation prices in years of future AI demand before the company has shown it can generate cash from a diversified customer base. Customer concentration is the most immediate concern: two Abu Dhabi–linked customers, MBZUAI (an AI research university) and G42 (an AI and cloud computing group), together accounted for roughly 86% of 2025 revenue. That concentration ties Cerebras's results to a single region, with attendant geopolitical sensitivity, a history of U.S. national-security scrutiny, and potential export-control risk. Backlog conversion is the next major risk: only about 15% of the $24.6B contracted backlog is expected to be recognized as revenue through 2027, and the remainder depends on data-center capacity, power availability, and customer acceptance. Cerebras also faces intense competition from Nvidia and AMD, as well as from the custom AI chips that Google, AWS, and Microsoft are developing in-house. At roughly 52 times trailing revenue, the IPO valuation leaves limited room for execution mistakes.
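Converting the concentration and backlog percentages into dollar terms makes their scale easier to see. This sketch assumes the 86% share applies to the full $510.0M of 2025 revenue and the 15% share to the full $24.6B backlog; because the source figures are approximate, the outputs are rough, and the function names are hypothetical.

```python
def split_backlog(backlog_b: float, near_term_share: float) -> tuple[float, float]:
    """Split backlog ($B) into the portion expected through 2027 and the remainder."""
    near_term = backlog_b * near_term_share
    return near_term, backlog_b - near_term


def concentrated_revenue(revenue_m: float, top_share: float) -> tuple[float, float]:
    """Split revenue ($M) into the top customers' share and everyone else's."""
    top = revenue_m * top_share
    return top, revenue_m - top


if __name__ == "__main__":
    near, later = split_backlog(24.6, 0.15)          # ~15% of $24.6B expected through 2027
    top, rest = concentrated_revenue(510.0, 0.86)    # MBZUAI + G42 ~86% of 2025 revenue
    print(f"Backlog through 2027: ~${near:.2f}B; after 2027: ~${later:.2f}B")
    print(f"Top-two customers: ~${top:.1f}M; all others: ~${rest:.1f}M")
```

That works out to roughly $3.69B of backlog recognized through 2027 against about $20.91B afterward, and about $438.6M of 2025 revenue from the two Abu Dhabi–linked customers versus roughly $71.4M from all others.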

INNOVATIONS

Cerebras's core innovation is wafer-scale computing. By keeping the entire AI processor on a single piece of silicon rather than spreading the work across thousands of GPUs, Cerebras reduces how far data must move, so inference runs faster. That advantage grows as AI workloads shift from training toward real-time applications such as coding agents, copilots, and interactive assistants. Cerebras holds patents covering wafer-scale integration, on-chip mesh routing, and deep-learning acceleration hardware, IP that competitors would face substantial engineering and legal hurdles to replicate. Recent partnerships support its commercial relevance: OpenAI announced a 750 MW deployment, AWS made the CS-3 available through Bedrock, and Meta selected Cerebras for the Llama API.